Over the Christmas week, CapX is republishing its favourite pieces from the past year.

It was hard not to detect a defensive, even plaintive, tone in Mark Zuckerberg’s open letter to Facebook users this weekend.

“After the election, many people are asking whether fake news contributed to the result,” he acknowledged.

But that’s not what they were asking at all, and Zuckerberg knows it.

Yes, Facebook (and the internet more generally) has a big problem with fake news. If you haven’t read it, I highly recommend this piece by Craig Silverman of BuzzFeed on the Macedonian town which devoted itself to pumping out fictitious pro-Trump propaganda in order to harvest the ad revenue. Or this round-up of the obviously fake stories that made their way into Facebook’s “trending” story box after it fired its human editors.

But of far more concern than fake news is slanted news – the fact that Facebook and other websites had fed everyone news so perfectly tailored to their personal tastes that it had divided America neatly into two.

The half that liked stories from the New York Times not only had nothing to say to the half that retweeted Breitbart, but wasn’t really aware that it was out there. And the shock of Donald Trump’s victory was redoubled by the fact that it was brought about by an America that many people barely realised still existed.

For those of us in Britain, it was a familiar sensation. On the night of the 2015 general election, young people who had genuinely convinced themselves that Ed Miliband was about to become Prime Minister were shell-shocked to discover that their elders, absent from social media, had voted en bloc for the Tories.

Likewise, after Brexit, there was the crunching psychological shock of one half of the country (well, 48 per cent of it) realising that the other 52 per cent actually existed.

What they had become trapped in was what Eli Pariser, in his book of the same name, describes as a “filter bubble” – a state of affairs in which Facebook, or the internet more broadly, shows you only what you want to see, to the point where you start to believe that it is all there is.

This isn’t political – it’s entirely accidental. The highest goal of any social network is to maximise usage. That means that if you like something – say a post on why Hillary Clinton deserves to be locked up – it will show you more of the same, and fewer pieces arguing that she’s actually a deeply misunderstood public servant.

The result is the situation depicted by the Wall Street Journal’s “Blue Feed, Red Feed” experiment. It shows the coverage you’d get of particular issues if you had a generically Democratic Facebook feed or a generically Republican one. They are, needless to say, completely antithetical.

The alarming thing about this isn’t so much that people have divergent opinions – it’s what happens next.

It’s a truism of social science that when people form into groups, those groups will become more extreme. We perceive others to be more passionate about an issue than we are, so intensify our own commitment in order to cement our place in the group.

That’s actually one of the recruitment techniques used by extremist organisations – but it applies just as well to fan clubs or interest groups of any kind. The more you talk to others about something, the more that issue comes to define you, and the more intense your feelings about it get.

In many ways, this isn’t actually about the internet at all, but about everyday life. As research by Matthew Gentzkow and Jesse Shapiro has shown, online interaction is actually much less polarised and biased than everything else around us.

In a recent interview with CapX about his new book, Tim Harford talked about our tendency – when given the chance – to cluster with people like ourselves. Increasingly, today, like talks to like and like marries like – certainly among the well-educated elites.

The result, as Gentzkow and Shapiro point out, is that our social lives and workplaces become deafening echo chambers even without Facebook’s contribution.

For example, one of the best-known illustrations of Facebook’s filter bubbling came from the internet activist Tom Steinberg, who went looking on his Facebook feed – in the wake of the Brexit vote – for the 52 per cent of the public who must theoretically be celebrating. He couldn’t find them.

But by its nature, that’s a feed of people he mostly knew from the real world. In this instance, Facebook isn’t distorting their views – it’s revealing their herd mentality.

What has changed in terms of the media, at any rate, is that this tendency towards separation used to be counteracted by the dominance of certain voices: the big TV networks in the US, the BBC in the UK.

These defined the contours and context of “normal” political discussion, the boundaries for what was considered acceptable opinion. There was also a much greater willingness to accept the word of established authority, of every kind.

Today, that situation has broken down. We live in an age of exhilarating yet often overwhelming media entrepreneurship, when those of every stripe can make their voices heard.

And this has consequences far beyond the media, or even politics.

The free market is, at its simplest, a beautiful and incredibly powerful exercise in trust. Every time we buy something from someone we don’t know – or, in the case of the internet, from someone we can’t even see – we are trusting that the goods will be as described, that the sale will go through, that if things go wrong there are methods of redress.

But it goes deeper than that. Trust is, as Eric Uslaner puts it, “the chicken soup of social life”. Countries where trust is high – in government, in politicians, in our fellow citizens – tend, as a rule, to be more pleasant and prosperous places.

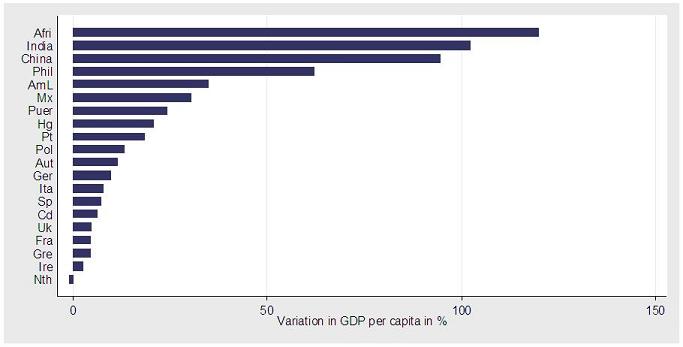

Stephen Knack and Philip Keefer have shown that high trust is closely correlated with economic growth. Indeed, if the UK had Scandinavian levels of mutual trust, GDP per capita would now be 5 per cent larger – and that of Africa and India would be double the size.

The problem in Africa and India, of course, is corruption. The problem in the West, by contrast, is corrosion.

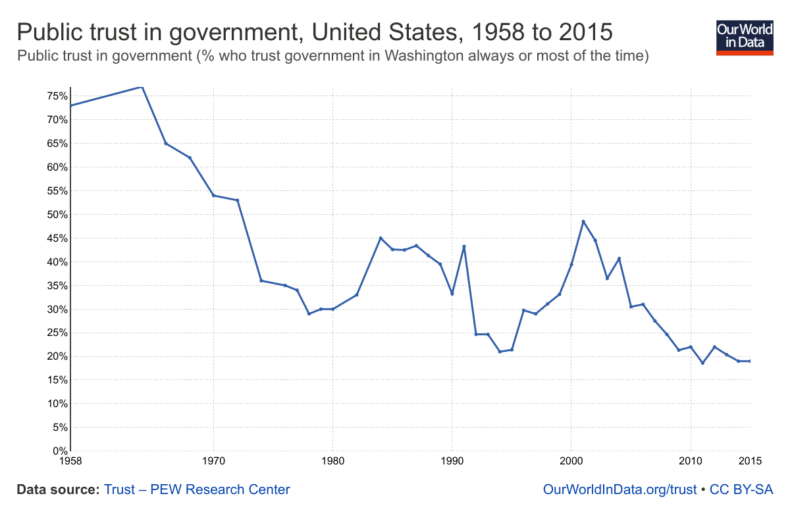

For example, we all know people trust their governments less than they used to:

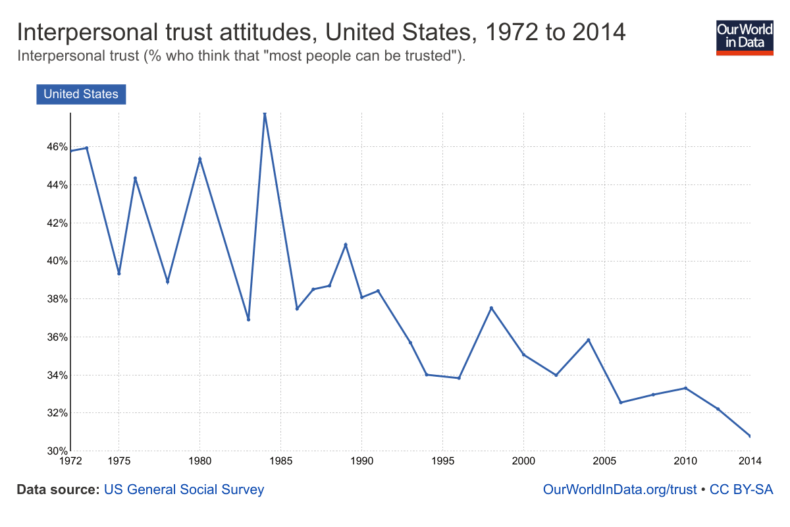

But they trust each other less, too – especially in the United States:

Given how long this process has been going on for, we obviously cannot blame Mark Zuckerberg for all of it, or even most of it. But it is hard not to notice the way the line has been curving downward more steeply in the age of personalised digital media – an erosion of mutual faith that seems relatively impervious to economic factors.

The rise of identity politics necessarily goes hand in hand with a loss of trust. If we do not see our fellow citizens as fellow citizens but as members of separate and even hostile tribes – and if we see ourselves that way too – then we are likely to be more sceptical, cynical and distrustful when it comes to dealing with those outside our social circle.

The problem with Facebook, and the social web more generally, isn’t just that it reinforces those tribal allegiances, but that it blinds us to their peculiarity.

In a world in which everyone agrees with you about Brexit, or Donald Trump, or the cuteness of a particular boy band member, it is a jarring shock to come across someone who doesn’t, let alone someone who holds a contradictory view with equal and opposite force.

So what can be done? Zuckerberg insists that “we”, by which he presumably means Facebook, “must be extremely cautious about becoming arbiters of truth ourselves”.

It’s true that no one wants Facebook as the world’s editor-in-chief. But as I’ve argued elsewhere, it’s kind of assumed the position by default.

Facebook should obviously do everything it can to weed out fake news – even if it means re-hiring a few human beings.

But in terms of the news feed itself, as its algorithms get better at showing us what we want to see, there is a growing case for saying that it should also show us what we need to see – that having a functioning, trusting society is worth the fractional drop in engagement that would result.

I’m not talking about an online version of the Fairness Doctrine, in which every pro-Trump story on a newsfeed has to be balanced by a pro-Hillary one. But it is surely possible for some signal to be given that those stories at least exist, for there to be an indication that what you are reading is not a matter of settled fact but the topic of contestation and dispute.

“I think it’s important to try to understand the perspective of people on the other side,” said Zuckerberg in his platitudinous non mea culpa. But how can you do that if you don’t even know they’re out there?